What You Should Know

Axios reported on March 30th, 2026, that venture capitalist David Sacks is involved in shaping a Trump-aligned AI agenda. The development lands as federal agencies, courts, and companies clash over how fast AI should move and who should police it.

Sacks, a prominent Silicon Valley investor and political megadonor, has moved into a policy lane where a few bullet points can steer billions in contracts, enforcement priorities, and national security restrictions.

Axios framed Sacks’ role as an attempt to put a coherent AI plan on paper for Trump-world, at a moment when tech leaders want fewer rules, Washington wants leverage over powerful models, and global competitors are not waiting for a committee vote.

Silicon Valley Writes a Federal Rulebook

The power move is not subtle. An administration’s AI posture can tilt federal procurement toward certain tools, set the tone for antitrust scrutiny, and determine whether agencies treat frontier models as ordinary software or as strategic infrastructure.

That puts Sacks in the middle of two incentives that do not always align: the public promise of American leadership and the private desire for speed, friendly regulation, and limited liability. If the same circle advising a candidate also holds equity across the sector, every policy choice gets read as both governance and portfolio management.

Biden’s Paper Trail, Trump’s Blank Space

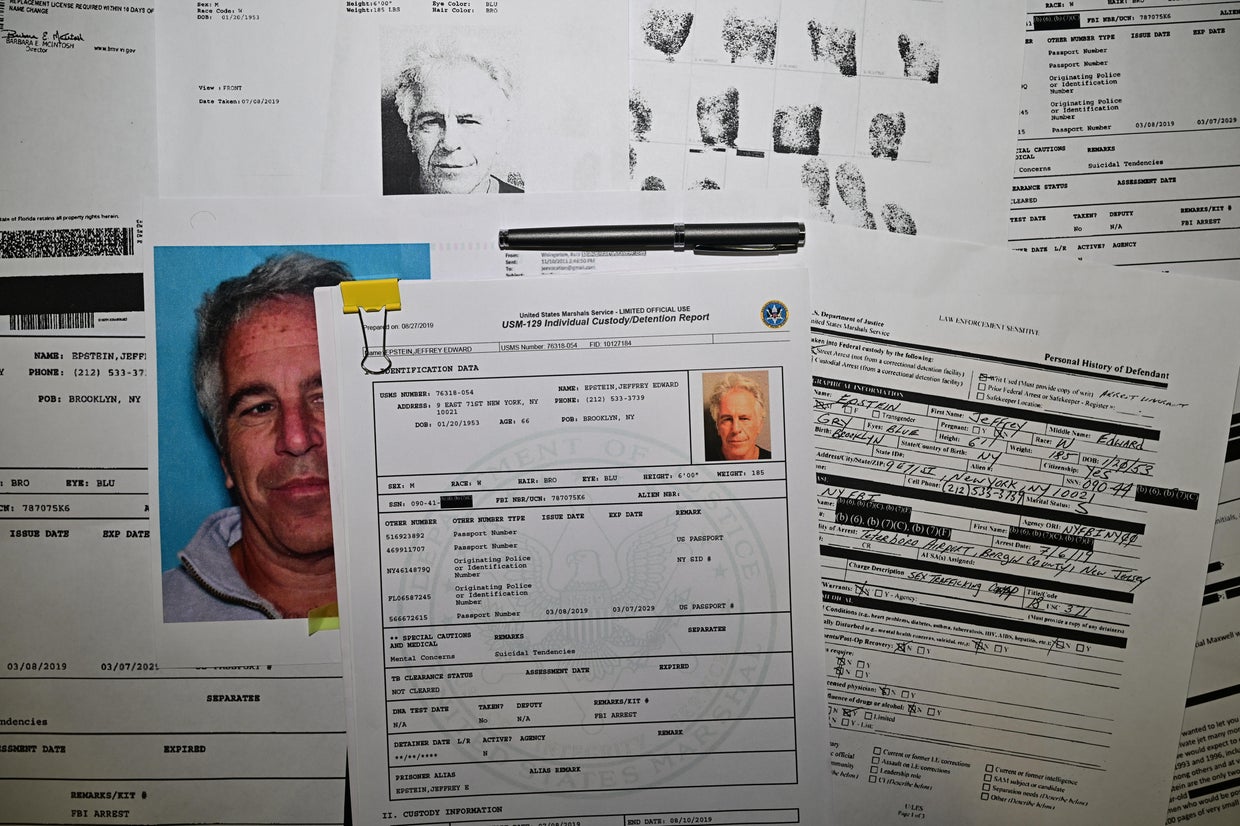

The contrast point is already on the record. On October 30th, 2023, President Joe Biden signed Executive Order 14110, which the Federal Register lists as “Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence,” a document that leaned on reporting requirements, standards work, and agency oversight rather than a pure accelerator strategy.

Trump, by comparison, has often talked about winning, beating China, and cutting red tape, but his AI blueprint has been less defined in official documentation. That vacuum is an opportunity for outside players to fill in details, then treat those details as the default plan once the campaign slogan fades.

Who Wins if the Referee Is an Investor

Three constituencies will be watching the next draft closely: AI companies facing copyright and safety lawsuits, federal agencies trying to set workable standards, and voters who will experience AI mostly through jobs, education, health care, and surveillance questions, not venture-capital talking points.

Also looming is the global chessboard. NIST has published an AI Risk Management Framework meant to normalize safety language across sectors, while U.S. export controls, chip supply chains, and model access debates keep pulling AI into national security territory. A weaker domestic regime could boost American firms, or it could hand adversaries a faster learning curve.

For Trump, letting a well-networked tech insider help sketch an AI agenda could look like efficiency. For critics, it could look like the regulated class holding the pen. The first version may be written far from Capitol Hill, but the consequences will land right on it.