Anthropic built one of the few AI systems cleared for classified networks. Now it is in court, arguing that the same government that approved it is using a blunt national security label as a punishment tool.

What You Should Know

Anthropic filed a federal lawsuit challenging the Defense Department’s supply chain risk designation and President Trump’s directive to end government use of its technology. It also asked the D.C. Circuit to review the Pentagon action and sought an emergency stay.

The case, filed March 9th, 2026, lands at a messy intersection of contracts, classified tech, and a White House that wants maximum flexibility for military use. Anthropic says the government crossed a line. The Pentagon is treating the company like a vendor that failed a security test.

How a Classified AI Tool Became a Contract Kill Switch

According to CBS News, the dispute traces back to Anthropic’s efforts to impose guardrails on the military’s use of its model, Claude. The company wanted assurances that Claude would not be used for mass surveillance of U.S. citizens or to power lethal autonomous weapons. The Pentagon’s position was simpler: if a use is lawful, the tool needs to be available.

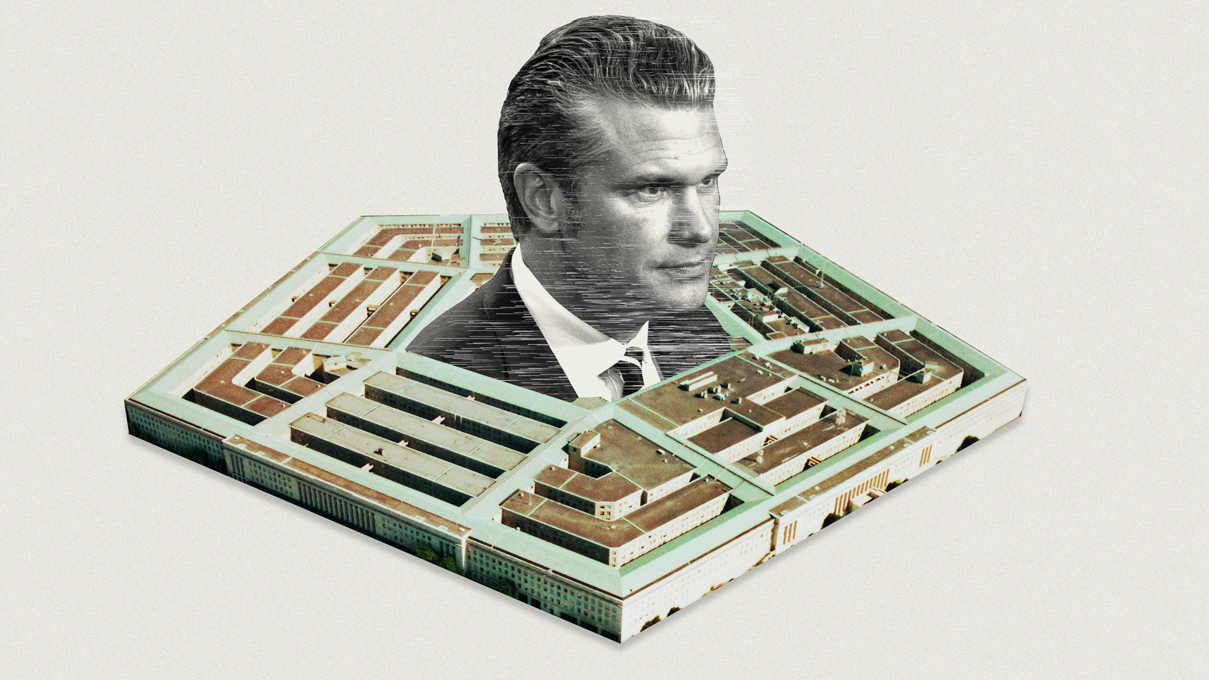

Those talks ran into a deadline of February 27th, 2026. Trump then announced he was ordering federal agencies to immediately stop using Anthropic’s technology, CBS reported. Defense Secretary Pete Hegseth followed with a plan to designate Anthropic a supply chain risk and phase out the tech over six months, cutting the company off from defense contracts.

Here is the awkward part: CBS News also reported that the Pentagon continued using Claude during the U.S.-Israeli war with Iran. If the model is too risky to buy, why is it still useful to run, even temporarily, in a live conflict? That question is not the whole lawsuit, but it is the kind of detail that tends to matter when judges start asking what is genuine security policy and what is leverage.

The Lawsuit’s Big Swing: Retaliation and Authority

In a 48-page complaint filed in the U.S. District Court for the Northern District of California, Anthropic frames the designation and the wider cutoff as retaliation. One line in the filing is blunt: “The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech.”

Anthropic is also fighting on a second front. CBS reports that the company filed a narrower case in the U.S. Court of Appeals for the District of Columbia Circuit, the venue federal law assigns for reviewing supply chain risk determinations, and sought an emergency stay on March 11th, 2026.

Why the Supply Chain Risk Label Hits Hard

The stakes are not abstract. Anthropic says government contracts are already being canceled, and it warns that private deals can wobble when the federal government brands a vendor a supply chain risk. By the company’s account, that puts hundreds of millions of dollars in near-term business in doubt. A Pentagon spokesperson declined to comment on pending litigation, CBS reported. The White House, meanwhile, defended the crackdown, with spokeswoman Liz Huston arguing the military will not be bound by what she described as a “woke” company’s terms.

Next comes the test that matters in Washington: whether courts treat this as a routine procurement and national security decision, or as an executive branch power play that used contracting authority to send a message to a high-profile tech supplier. Either way, every government-facing AI company is watching the definition of “supply chain risk” get road-tested in public.