The pitch that keeps getting sold to users is safety first. The story whistleblowers are telling, through documents, dashboards, and internal research, is that the real north star was the feed, and the contest over who could make it stickier.

What You Should Know

The BBC reports that more than a dozen whistleblowers and insiders say TikTok and Meta accepted safety risks while competing on recommendation algorithms. Meta and TikTok deny wrongdoing, calling the claims false or misleading.

According to BBC News, whistleblowers described internal decisions at TikTok and Meta that allegedly increased the amount of harmful content reaching users, even after company research flagged predictable risks tied to engagement-driven ranking.

The claims land at a moment when both companies make a similar promise: that their systems can surface entertaining content while controlling the worst stuff. The whistleblowers, speaking to the BBC documentary “Inside the Rage Machine,” describe a different operating reality shaped by competition, revenue, and political pressure.

The Incentive Problem, According to Insiders

At Meta, an engineer told the BBC that senior management pushed for more “borderline” content, including misogyny and conspiracy theories, to compete with TikTok. “They sort of told us that it’s because the stock price is down,” the engineer said.

A senior Meta researcher, Matt Motyl, told the BBC that Instagram Reels, Meta’s TikTok-style product, launched in 2020 without sufficient safeguards. Internal research shared with the BBC found Reels comments had a higher prevalence of bullying and harassment, hate speech, violence, or incitement than other areas of Instagram.

Another former senior Meta employee told the BBC that the company staffed up Reels with 700 hires while declining small staffing requests tied to protecting children and election integrity. The contrast points to the core power dynamic inside platforms: growth teams get headcount, while safety teams have to argue for it.

Politics First, Kids Later

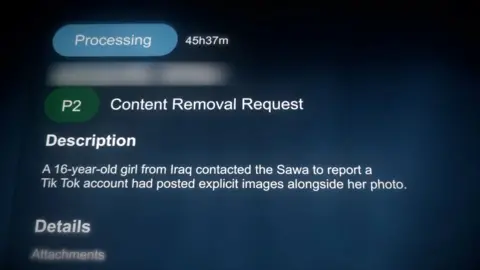

At TikTok, a staffer gave the BBC access to internal dashboards showing user complaints and triage. The staffer said cases involving politicians were prioritized over reports involving harmful posts featuring children, framing it as relationship management aimed at reducing regulatory threats.

Those allegations matter because they turn moderation from a policy question into a leverage question. If the queue bends around political sensitivity, then the people with the most influence do not just get their content boosted; they can also get their problems handled faster.

Denials, Documents, and the Next Fight

Both companies reject the whistleblowers’ framing. Meta told the BBC, “Any suggestion that we deliberately amplify harmful content for financial gain is wrong.” TikTok called the claims “fabricated” and said it invests in technology designed to keep harmful content from being viewed.

The deeper issue is that outsiders cannot easily test which story is closer to the truth, because recommendation systems are notoriously hard to inspect. Ruofan Ding, who worked on TikTok’s recommendation engine from 2020 until 2024, told the BBC that the algorithms are a “black box” whose internal workings are difficult to scrutinise.

If the whistleblowers’ accounts keep stacking up, the next battlefield is not just content policy but auditability, staffing priorities, and whether regulators can force visibility into the machine that decides what billions of people see.