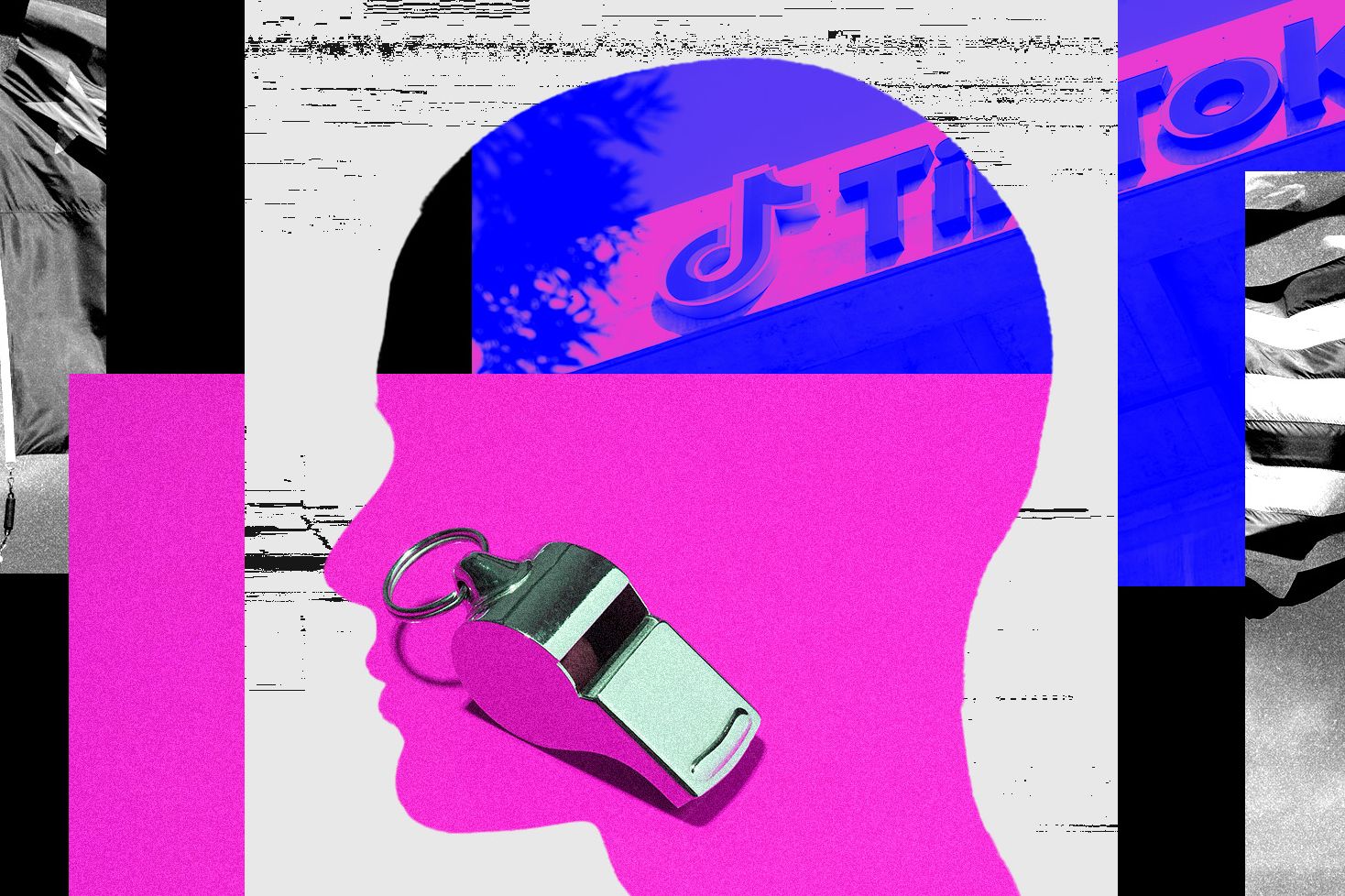

The fight for your attention is not just a product story. According to a new wave of insiders, it is a scoreboard where growth gets celebrated fast while safety fixes wait in line.

What You Should Know

BBC News reports that multiple whistleblowers and insiders allege TikTok and Meta accepted safety risks while competing on recommendation algorithms. Both companies deny wrongdoing: Meta says any suggestion that it deliberately amplifies harmful content is wrong, and TikTok calls key allegations fabricated.

The claims come through BBC reporting tied to the documentary “Inside the Rage Machine,” which features more than a dozen whistleblowers and insiders describing how both companies weighed user harm against engagement as competition intensified after TikTok's rise.

The Arms Race, According to the People Inside

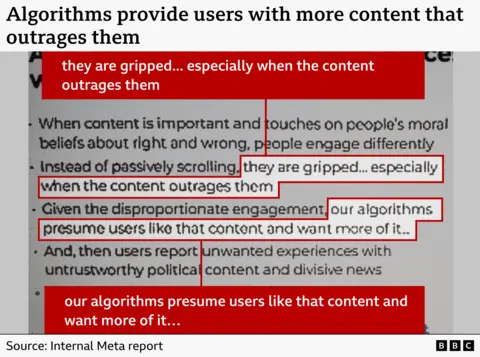

One Meta engineer told the BBC he was pushed to allow more “borderline” harmful material into feeds to compete with TikTok, describing content that could include misogyny and conspiracy theories.

His explanation for the pressure was blunt and money-coded. “They sort of told us that it’s because the stock price is down,” he said, according to the BBC.

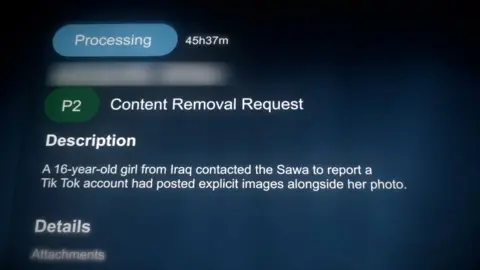

On the TikTok side, a staffer gave the BBC access to internal dashboards of user complaints and said the company prioritized some cases involving politicians, while reports involving harmful posts featuring children were treated as lower priority. The staffer described a push to maintain strong relationships with political figures to reduce the risk of regulation or bans.

Safety Budgets vs Growth Budgets

A former senior Meta employee told the BBC that while the company invested in hundreds of staff to grow Instagram Reels, requests for a small number of specialized safety hires were turned down, including roles focused on protecting children and election integrity.

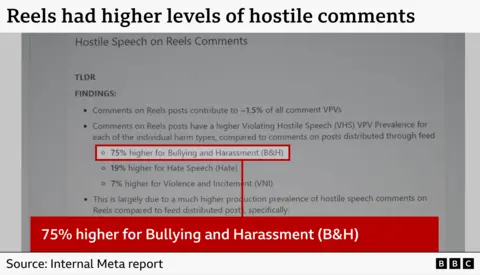

Matt Motyl, a senior Meta researcher cited by the BBC, said that Reels launched in 2020 without sufficient safeguards and that internal research showed higher levels of bullying and harassment, hate speech, and violence or incitement in Reels comments than elsewhere on Instagram.

Meta disputes the idea that it would trade harm for profit, telling the BBC, “Any suggestion that we deliberately amplify harmful content for financial gain is wrong.” TikTok, in the same reporting, called key allegations “fabricated claims” and said it invests in technology to prevent harmful content from being viewed.

What Happens When the Algorithm Is the Boss

The BBC reporting also surfaces a familiar problem for regulators and parents alike: the recommendation engine is often treated as a black box, even by the people building it. A former TikTok machine-learning engineer told the BBC the systems are difficult to make completely safe, and that engineers rely on separate safety teams to stop harmful posts before they get boosted.

What to watch next is not just whether the whistleblowers can produce more internal documents, but whether lawmakers treat this as a content-moderation argument or a product-design fight. The story is heading toward a simple question with expensive implications: who pays the price for engagement-first incentives?