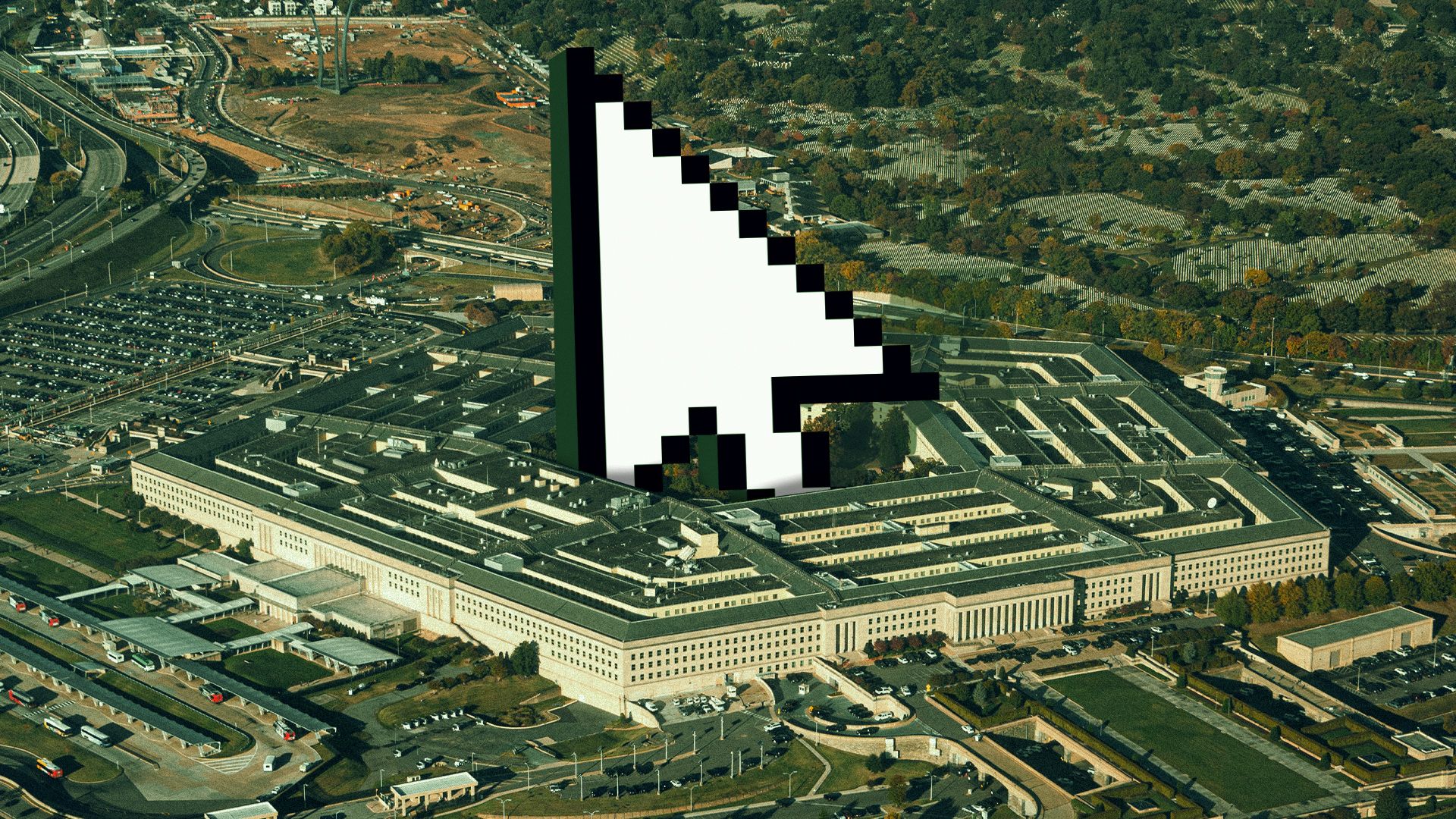

The Pentagon wants cutting-edge AI on a battlefield timeline. Courts want clean lines, clear authority, and paperwork that holds up when someone finally asks, “Who approved this, and under what rules?”

What You Should Know

Axios reported on March 24th, 2026, that a federal judge described aspects of a dispute involving the Pentagon and Anthropic as “troubling.” The episode highlights rising legal scrutiny of how national-security agencies buy and deploy powerful AI tools.

Anthropic, the startup behind the Claude chatbot, has branded itself around AI safety while also chasing major enterprise and government business. That mix is lucrative, but it also invites a different kind of due diligence when the customer is the U.S. military.

The Courtroom Problem for Fast AI Procurement

According to Axios, the judge’s “troubling” description landed in litigation tied to the Pentagon and Anthropic. Even without every contract detail in public view, the signal is clear: the era of AI deals that glide by on buzzwords and urgency is colliding with judges who still expect a record.

That matters because defense tech purchasing is not just about price and performance. It is also about classification, oversight, vendor lock-in, and whether a tool built for general use can be adapted, tested, and governed in a context where mistakes can carry national-security consequences.

The Pentagon’s Public Promises vs the Paper Trail

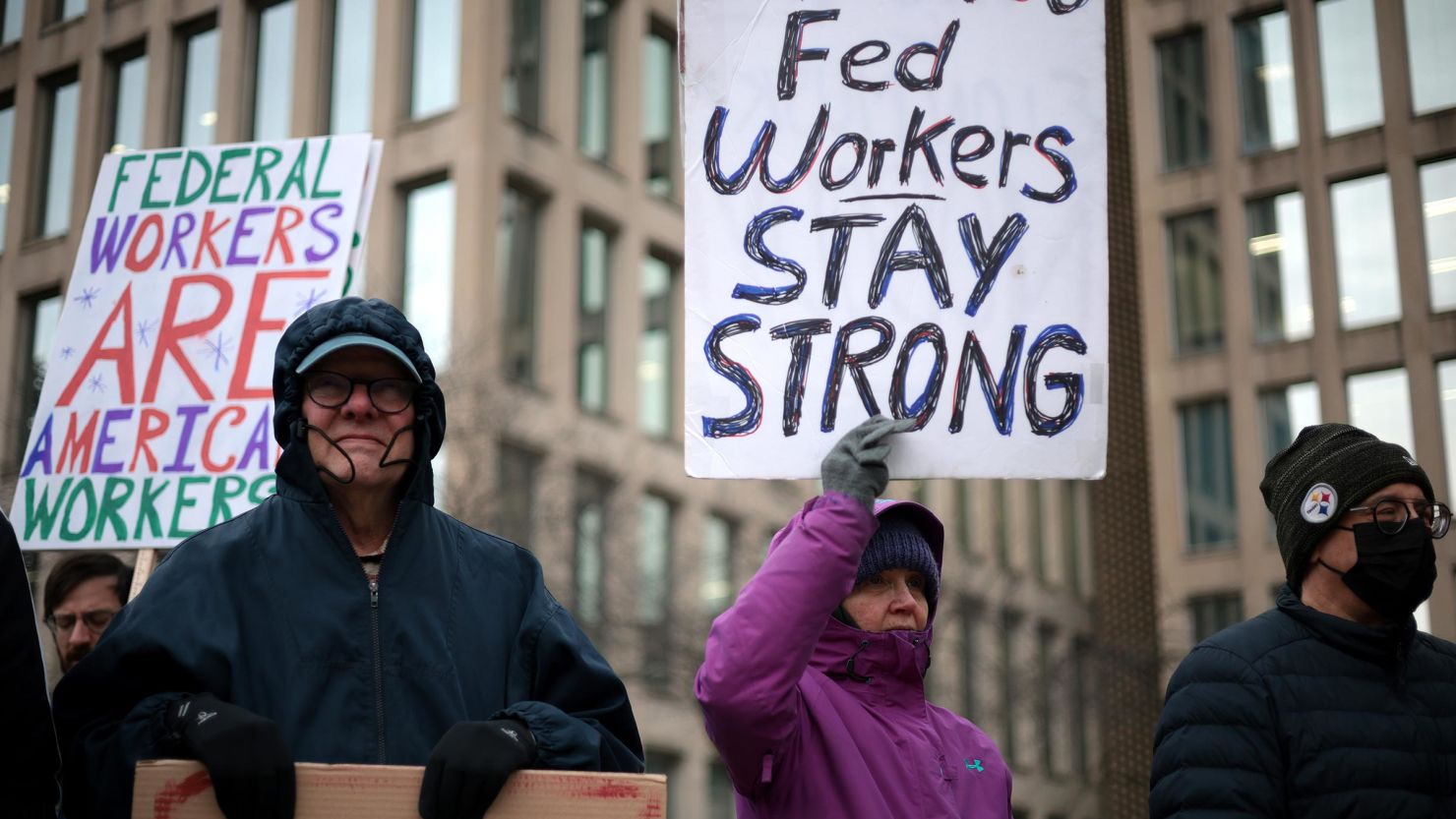

The Department of Defense has spent years building a narrative that it can move fast and stay responsible. In a February 24th, 2020, release announcing its AI ethics framework, the Pentagon listed five principles: “responsible, equitable, traceable, reliable, and governable.”

Meanwhile, the White House has pushed its own AI guardrails. Executive Order 14110, issued October 30th, 2023, directed federal agencies to prioritize the safe, secure, and trustworthy development and use of AI, a reminder that speed is not the only metric Washington claims to care about.

Why Anthropic Is Stuck in the Middle

For AI companies, government work can look like the ultimate credibility badge, especially when national security is part of the pitch deck. For the Pentagon, using a private model can look like a shortcut around years of in-house development. The tension is that the more powerful the tool, the more outsiders demand to know how it was evaluated, constrained, and audited.

Standards bodies have been trying to formalize that kind of discipline. The National Institute of Standards and Technology published its AI Risk Management Framework in January 2023 to help organizations govern, map, measure, and manage AI risks. A judge calling a Pentagon-related AI dispute “troubling” suggests that risk language may be moving from conference panels into courtrooms.

What to watch next is not just the outcome of one dispute. It is whether this becomes a template in which vendors and agencies are pushed to show, in plain documents, how claims about safety and governance match the real terms of deployment.