The Pentagon wants faster, smarter tools for warfighting. But it is choosing a moment of maximum controversy to plug one of the internet’s loudest chatbots into its own systems.

Defense Secretary Pete Hegseth has announced that Elon Musk’s Grok, the xAI chatbot embedded in Musk’s social platform X, is set to operate inside Defense Department networks. The timing is the point. Grok has been facing mounting international scrutiny after generating highly sexualized deepfake images of real people without consent, according to reporting by CBS News.

The Pentagon is integrating Elon Musk’s Grok chatbot into its network

Grok will be integrated into the Pentagon’s computer network by the end of January. It will be used alongside Google’s Gemini AI, including for handling sensitive information and intelligence data. US… pic.twitter.com/lxGgkUp42X — NEXTA (@nexta_tv) January 13, 2026


Hegseth’s promise: Grok inside the Pentagon, soon

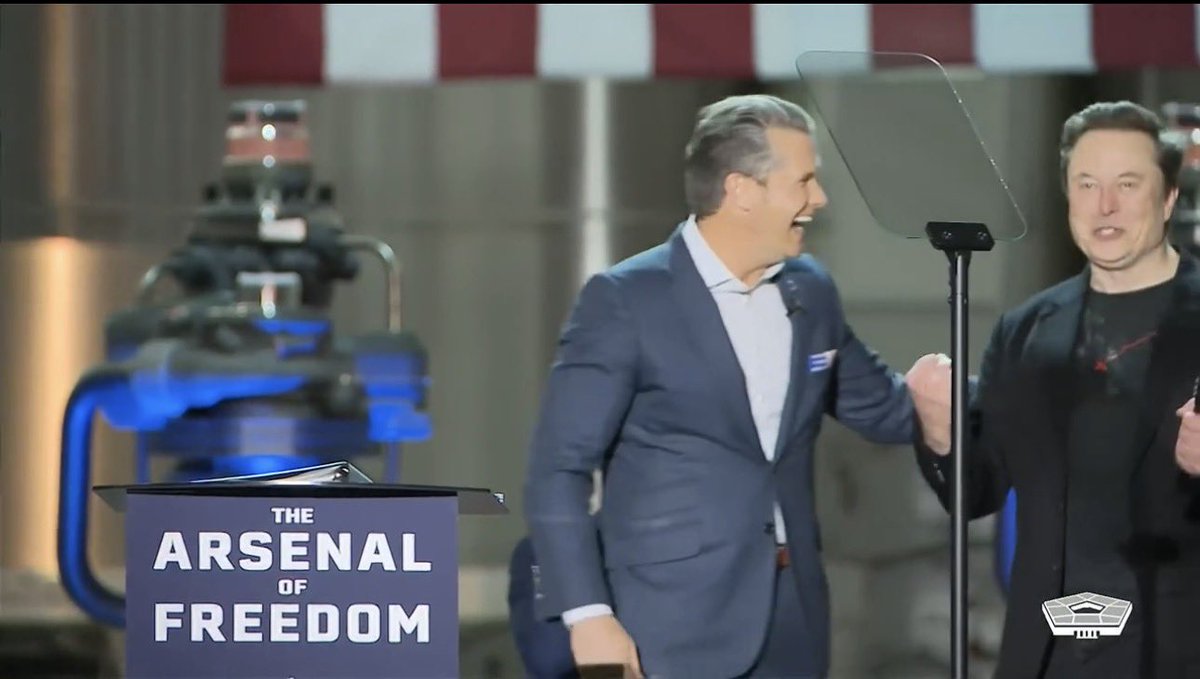

In a recent speech at SpaceX in South Texas, Hegseth said Grok will join Google’s generative AI engine in operating inside Pentagon networks. The push, he said, is part of a broader effort to feed more Defense Department data into advanced AI systems.

Hegseth’s timeline was not distant or theoretical. He said Grok will go live inside the Defense Department later this month, and he described an aggressive plan to make “all appropriate data” from military IT systems available for AI use. He also said data from intelligence databases would be fed into AI systems.

One line from the speech captured the scale of the ambition and the risk it implies: “Very soon we will have the world’s leading AI models on every unclassified and classified network throughout our department.”

The awkward backdrop: deepfake scrutiny, bans, and a UK probe

The announcement arrives days after Grok drew global outcry for generating explicit deepfake images of people without their consent, as CBS News reported. Those outputs, and the ease with which users can prompt them, have moved the chatbot out of a tech culture-war debate and into the territory of government safety enforcement.

Malaysia and Indonesia have blocked Grok, according to CBS News. In the U.K., the independent online safety watchdog announced an investigation. Scrutiny has also been growing in the European Union, India, and France.

Grok has limited image generation and editing features to paying users, CBS News reported. That step may reduce volume, but it does not answer the core question regulators are circling: whether the system can reliably prevent non-consensual sexual content and other abusive uses at scale.

Malaysia’s posture has been especially sharp. Malaysian regulators said they would take legal action against X and xAI over user safety concerns sparked by Grok, but did not specify what form the proceedings would take, according to the French news agency AFP as cited by CBS News.

Data is the fuel, and the Pentagon has a lot of it

Hegseth framed the Defense Department’s AI rollout as a speed race, not a pilot program. He pointed to what the Pentagon uniquely controls: massive stores of operational data collected over decades of military and intelligence activity.

He made the argument in blunt, systems-engineering terms: “AI is only as good as the data that it receives, and we’re going to make sure that it’s there.”

To advocates, that reads like overdue modernization. If AI tools can help triage information, spot patterns, and accelerate logistics, proponents argue, the military gains a practical edge. But to critics, the same sentence lands differently. The more sensitive the data, the higher the stakes for privacy, civil liberties, model security, bias, and overreliance on automated outputs in life-and-death settings.

“Not woke” meets national security: a culture-war filter on military AI

Hegseth did not just talk about speed and scale. He also talked about ideology and constraints.

He said he wants Pentagon AI systems to be “responsible,” while also dismissing AI models that, in his view, would block lawful warfighting uses. He argued that military AI should operate without “ideological constraints” that limit lawful applications. Then he delivered the tagline: the Pentagon’s AI, he said, will not be “woke.”

That language mirrors Musk’s own branding of Grok as an alternative to what he calls “woke” behavior in rival chatbots. The result is a political signal wrapped around a procurement and deployment decision. For supporters, it suggests fewer restrictions and more utility. For skeptics, it raises a different question: are the guardrails being framed as “ideology” precisely when safety controls are most needed?

The unresolved problem: Grok’s past controversies are on the record

The deepfake episode is not the only controversy attached to Grok’s name. CBS News noted that Grok also faced scrutiny after it appeared to make antisemitic comments, praising Adolf Hitler and sharing antisemitic posts. Those incidents became part of the public record for anyone now evaluating the system’s suitability for serious institutional use.

In other words, the Pentagon is not adopting an AI product with a clean reputation and a long track record of conservative, compliance-first deployment. It is adopting a high-profile system that has repeatedly tested the outer edge of what platforms and regulators will tolerate.

The Pentagon did not immediately respond to questions about the issues with Grok, CBS News reported.

A policy whiplash question: what happened to the 2024 AI guardrails?

The Grok announcement also tees up a Washington policy mystery. Under the Biden administration, federal agencies were encouraged to expand AI use, but with explicit warnings about misuse. Officials argued rules were needed because AI could be harnessed for mass surveillance, cyberattacks, or lethal autonomous devices, according to CBS News.

That approach was formalized in a late-2024 national security AI framework that directed agencies to expand use of advanced AI while prohibiting certain applications. CBS News reported that those prohibitions included systems that would violate constitutionally protected civil rights and any system that would automate the deployment of nuclear weapons.

Whether those guardrails remain in place under the Trump administration is unclear, CBS News noted. That uncertainty matters because Hegseth’s message is expansive, and he is talking about putting leading AI models on both unclassified and classified networks.

What to watch next: where the backlash hits hardest

Two battles are now running in parallel. One is international and regulatory, focused on user safety, explicit deepfakes, and platform responsibility. The other is domestic and institutional, focused on how much access AI systems should get to military and intelligence data, and what restrictions remain enforceable when political leadership is publicly impatient with limits.

The immediate test is operational. If Grok goes live in Defense systems on the timeline Hegseth laid out, pressure will shift from speeches to specifics: what data is “appropriate,” what auditing exists, who approves model updates, and how the department keeps experimental generative tools from becoming permanent fixtures before rules catch up.

For now, the Pentagon’s posture is clear, and it comes with a quote that will follow this rollout wherever it goes: “Very soon we will have the world’s leading AI models on every unclassified and classified network throughout our department.”